How does ChatGPT connect to a 1-click crew change, and what does that tell us about how AI-based tools will change complex enterprise workflows?

§Where it started

89 days ago (about 12 weeks), Nick - my co-founder and our CEO - sent me this link.

Implied was a question that he later made explicit over coffee - can we do this? Can we make a 1-click crew change? Can we, in a single click, decide where to stop a vessel, which flights to buy, which visas to consider, which agents to engage, and which seafarers to board and disembark at what time - such that a crew change happens?

My first reaction, I think, was just shock at the sheer audacity of this question for simply existing in the world. A crew change - an exchange of fresh crew with people that are coming to the end of their contract and being flown home - is a complex dance of real-world restrictions, changing prices, ship routing, fuel estimation, and many more things.

Could it be automated? This is a question I was used to wondering about, but in the occasional sense; a lot like wondering about designer babies and interstellar travel. Maybe someday - and we're definitely getting closer - but how absurd to ask if it can be done tomorrow!

The next instant had me realizing that we could do it - that for the first time in three years, we had solved enough problems that we could deliver a 1-click crew change. Not without issue, and not as a replacement for the men and women who do this job, but it was possible to deliver this as an aid that helped improve things.

It wasn't a high priority, so it took me a week to double-check my assumptions and another week to work the UI out into some sketches:

Two weeks after the message, we had it in the development schedule:

Three weeks on, we had the feature merged into production,

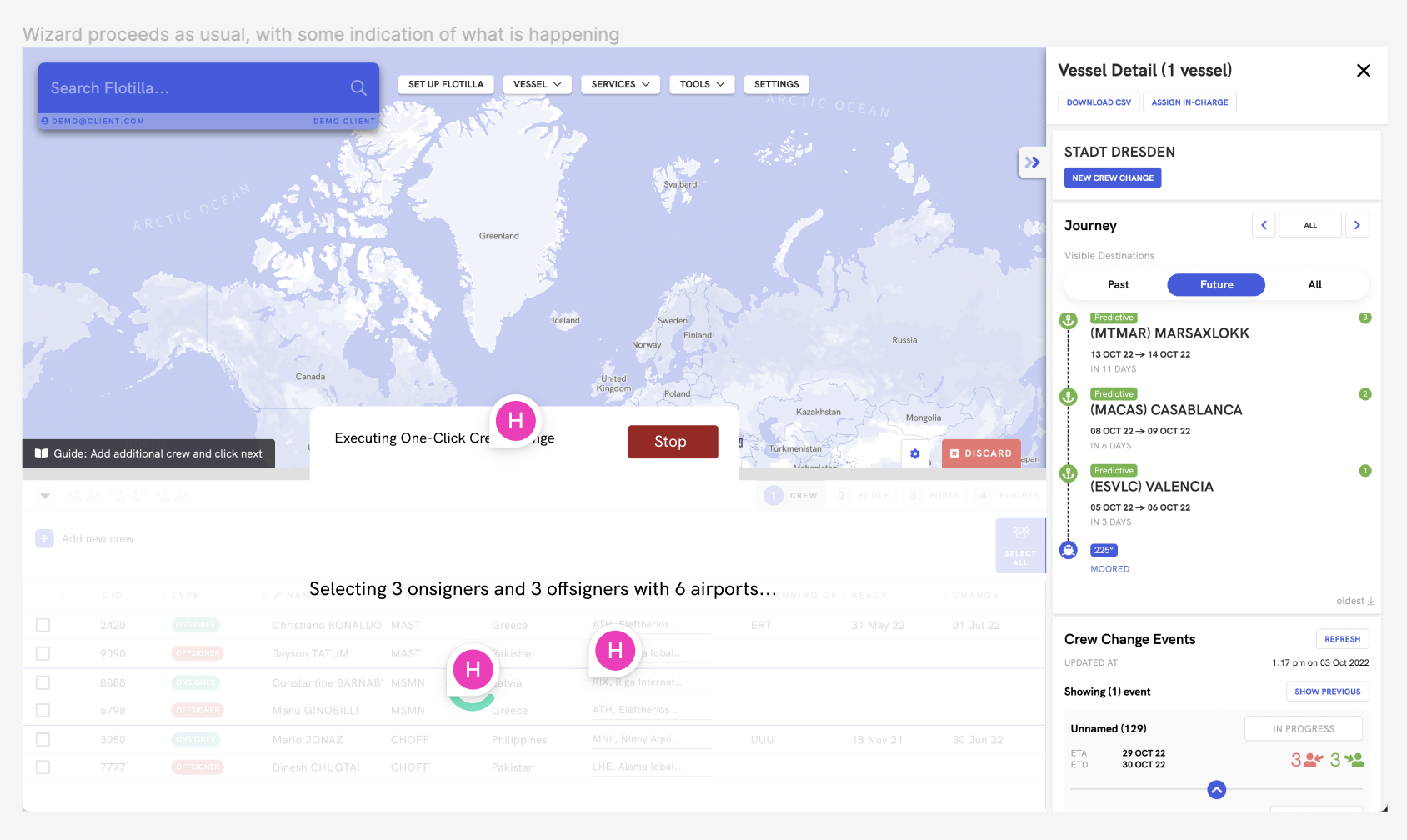

and had conducted the world's first one-click crew change assessment:

The interesting thing was that we then released it without much fanfare. You could get a crew change assessment in a single click, but it was never 100% right. We didn't want to talk about something if we couldn't promise a strong user experience that always improved things. At release, it was more meaningful as a technology demonstrator to us than it was to our customers - or so we felt.

So why talk about it now? Is it better? Yes - but not so much better that I would consider the results a hundred percent right. I'm writing about it now because it has changed the way we work - in a way I think relates to the future of AI-driven tools. I'm writing about it because it has shown us a new workflow - a corrective one instead of a constructive one - that I think holds a lot of potential in AI-based automation.

I'd also like to talk about the problems and solutions we've gone through to actually get this far. If you want to skip ahead to the part about corrective workflows - and I've done my best to make it make sense despite your cruel skip - you can click here.

§Problem Zero - Relationships

Maritime is an industry with a large number of players - big and small - stratified into different parts of the industry. A single crew change might involve multiple manning agents, crew managers, technical managers, travel agents, port agents, and more. These are often people that work for different companies that sit in different offices in different countries. Trying to get a sense of the right decision means understanding not only the workflows and preferences of each individual and company, but connecting to the massive repository of (analog) information they use to do their jobs.

We were building software for vessel operations, but we weren't travel agents, manning agents or crew managers - and we certainly didn't know what the good ones knew after decades of experience. We needed them to teach us, and to work with us to bring their expertise and knowledge online.

This means the same thing it does in any industry - relationships. Partnerships and relationships have been, in retrospect, the thing we have been most successful at as a company. Being able to connect to multiple companies in each segment has made the process much smoother. So has our offering, which is a completely new product in the crew intelligence vertical - which I'm pretty sure we invented. (It was, however, a vote of confidence to hear this year that we have some competition in this vertical.)

I don't think any of the further problems I will list could have had a chance at troubling us, had we not solved this one. Had it not been for the wonderful first customers and partners in this space taking the time and effort to teach us how to do things their way, and to make sense of what they knew, we would never have gotten off the ground.

§Problem One - Data

This is something I've written about before, so I'll be brief. After we had found partners that were willing to let us use their data, we had the problem of the data itself. Most maritime data works on an honor system, which means lots of typos, false and invalid rows, and not always knowing which is which. This was also around the time that I discovered that none of the companies I worked with agreed on a list of countries in the world.

This is a problem we're still solving today, but it's also one we have gotten exceedingly good at. We have ways of incorporating probabilistic estimates of data, adding aliases so a single thing can have multiple references, using compound primary keys so that several joined fields together identify something uniquely, and on and on.
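To make aliases and compound keys concrete, here is a minimal Python sketch. The alias table, the `SeafarerKey` fields and every helper name are hypothetical, chosen for illustration rather than taken from our codebase, and the probabilistic side of the pipeline is left out entirely.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional, Tuple

# Hypothetical alias table: every spelling seen in partner data maps to one canonical name.
COUNTRY_ALIASES: Dict[str, str] = {
    "ph": "Philippines",
    "philippines": "Philippines",
    "the philippines": "Philippines",
    "republic of the philippines": "Philippines",
    "uk": "United Kingdom",
    "great britain": "United Kingdom",
    "united kingdom": "United Kingdom",
}


def canonical_country(raw: str) -> Optional[str]:
    """Collapse whitespace and case, then resolve the value through the alias table."""
    key = " ".join(raw.lower().split())
    return COUNTRY_ALIASES.get(key)


@dataclass(frozen=True)
class SeafarerKey:
    """Compound key: no single field is unique, but together they identify one person."""
    surname: str
    date_of_birth: str  # ISO 8601, e.g. "1985-04-12"
    nationality: str    # canonical country name


def make_key(surname: str, dob: str, nationality: str) -> Optional[SeafarerKey]:
    country = canonical_country(nationality)
    if country is None:
        return None  # unknown alias: return None so the caller can route the row to review
    return SeafarerKey(surname.strip().upper(), dob.strip(), country)


def dedupe(rows: Iterable[Tuple[str, str, str]]) -> Dict[SeafarerKey, Tuple[str, str, str]]:
    """Keep the first row seen for each compound key; rows whose key cannot be resolved are skipped."""
    unique: Dict[SeafarerKey, Tuple[str, str, str]] = {}
    for row in rows:
        key = make_key(*row)
        if key is not None:
            unique.setdefault(key, row)
    return unique
```

With this, a row for `dela Cruz` tagged `PH` and another tagged `Republic of the Philippines` collapse into one record. Skipping unresolved rows is a simplification; in practice you would want them surfaced rather than silently dropped.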

It's often said that the FAA's regulations for aircraft are written in blood. Our data processing code and guidelines are similarly written in bugs - each line and procedure exists because something about the underlying data caused problems that needed this fix.

§Problem Two - Algorithms

The next step - also covered in zero to one problems in maritime - was that we needed to build infrastructure that was only tangentially related to the problem we were solving. Some of these problems were products or companies in their own right, but they were also Lego blocks we needed to solve crew changes. One example is routing. Another is translating between timezones for flights, connections, ports, and so on. Today, we have a routing engine, fuel table processor, currency converter, and many other modules that simply serve to transform data into useful prediction blocks for automation.
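As an illustration of the timezone problem, here is a small Python sketch that computes layovers and total journey time from schedule times given in local time. It assumes each time comes as a naive local timestamp plus a fixed UTC offset; a production system would resolve IANA zones (for example via `zoneinfo`) so daylight-saving changes are handled. `Leg`, `local_time` and the itinerary, including its times, are made up for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


def local_time(iso_local: str, utc_offset_hours: float) -> datetime:
    """Attach a fixed UTC offset to a naive local timestamp taken from a flight schedule."""
    naive = datetime.fromisoformat(iso_local)
    return naive.replace(tzinfo=timezone(timedelta(hours=utc_offset_hours)))


@dataclass
class Leg:
    origin: str        # airport code
    destination: str   # airport code
    departs: datetime  # timezone-aware, in the origin's local time
    arrives: datetime  # timezone-aware, in the destination's local time


def layovers(legs: List[Leg]) -> List[timedelta]:
    """Time on the ground between consecutive legs; aware datetimes subtract in absolute time."""
    return [nxt.departs - prev.arrives for prev, nxt in zip(legs, legs[1:])]


def total_duration(legs: List[Leg]) -> timedelta:
    """Door-to-door time from first departure to last arrival."""
    return legs[-1].arrives - legs[0].departs


# Illustrative itinerary with made-up times: Manila -> Singapore -> Amsterdam.
itinerary = [
    Leg("MNL", "SIN", local_time("2023-01-10T08:00", 8), local_time("2023-01-10T11:30", 8)),
    Leg("SIN", "AMS", local_time("2023-01-10T23:55", 8), local_time("2023-01-11T06:30", 1)),
]
print([str(d) for d in layovers(itinerary)])  # ['12:25:00']
print(total_duration(itinerary))              # 1 day, 5:30:00
```

Reading the clock times naively would put the Amsterdam arrival less than a day after the Manila departure; converting through UTC offsets gives the real 29.5 hours.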

§Problem Three - Parameters & Preferences

Problems three and four have been the focus of 2022 for us - at least for the most part. If you solve the hard backend and data problems, you are now presented with the final parts of delivering a successful solution - UI/UX. This wasn't trivial in our case - I've expanded on two of the many problems with crew change interfaces before. If you'd like to read about them, look for button syndrome and telegraphing.

The short version is that crew changes have no real digital interface we can learn from, except Excel and Email. We borrowed from both, but neither lends itself well to our priorities. What we wanted was an interface that was self-teaching - which indicated what the next logical action was, where to look for certain controls, and hid away anything the user's context did not need. If you were looking for flights, you did not need a port selector.

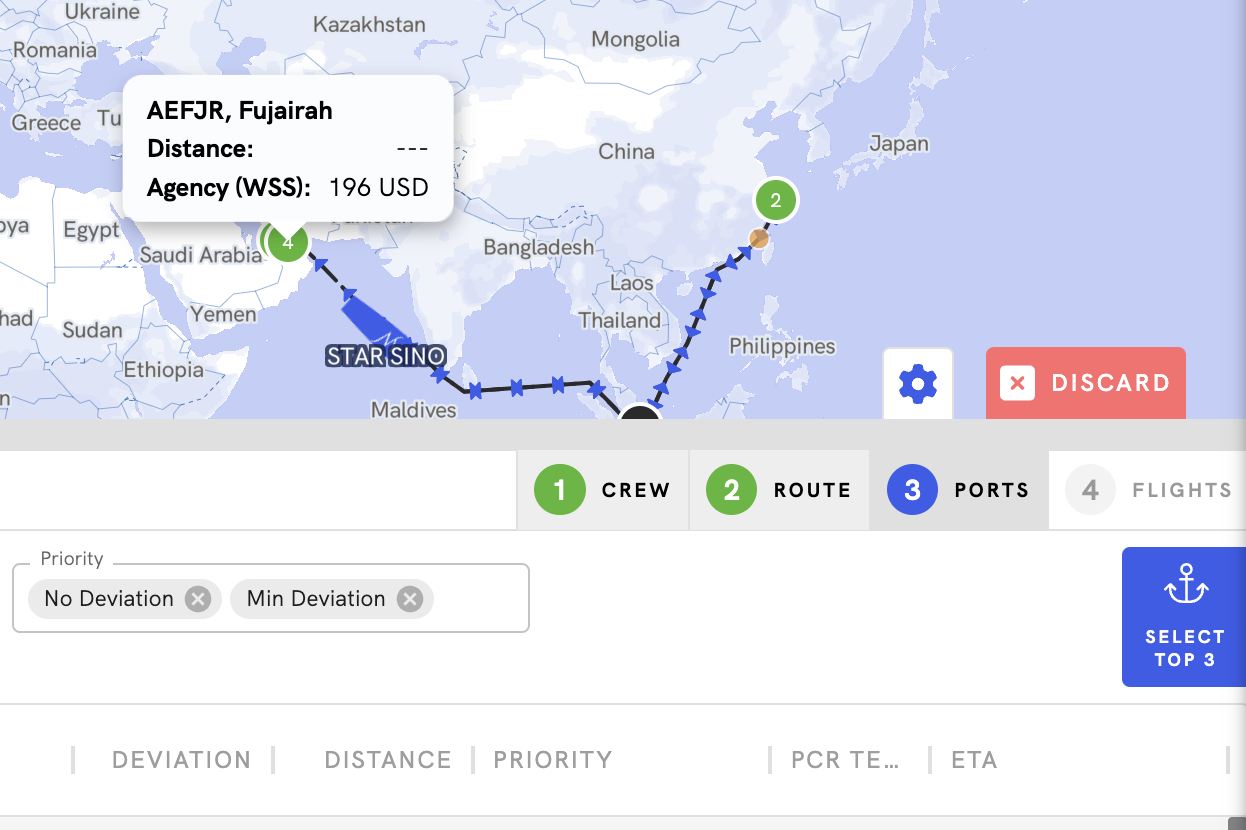

We ended up using the map as the base primitive - everything we displayed had coordinates, be it a vessel, a flight, a port or an airport. From there, we built a counterclockwise flow that moved you through the stages of planning a crew change - select crew, select ports, select flights and send out booking requests.
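One way to picture "everything has coordinates" in code is a single structural type that every map object satisfies, so the same helpers work on vessels, ports and airports alike. This Python sketch is hypothetical: the `Located` protocol and class names are illustrative, and haversine gives straight-line distance over the globe, not sea distance, which would come from a routing engine.

```python
import math
from dataclasses import dataclass
from typing import Protocol, Sequence


class Located(Protocol):
    """Anything that can be drawn on the map: it only needs a name and coordinates."""
    name: str
    lat: float  # degrees north
    lon: float  # degrees east


@dataclass
class Vessel:
    name: str
    lat: float
    lon: float


@dataclass
class Port:
    name: str
    lat: float
    lon: float


@dataclass
class Airport:
    name: str
    lat: float
    lon: float


EARTH_RADIUS_KM = 6371.0


def haversine_km(a: Located, b: Located) -> float:
    """Great-circle distance between two map objects, in kilometres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest(origin: Located, candidates: Sequence[Located]) -> Located:
    """Pick the candidate closest to origin, e.g. the airport nearest a port."""
    return min(candidates, key=lambda c: haversine_km(origin, c))


singapore = Port("Singapore", 1.26, 103.84)
sin = Airport("SIN", 1.36, 103.99)
mnl = Airport("MNL", 14.51, 121.02)
print(nearest(singapore, [mnl, sin]).name)  # SIN
```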

This let us add gradual nudges at each stage, layering in more advice and automation from us. For example:

Once we were relatively confident in our ordering of potential ports for a crew change, we could have the user click Next to auto-select the top three ports from the list.
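A minimal Python sketch of that nudge: rank the candidate ports, and let Next accept the top three. The fields and the scoring rule (filter out visa problems, then sort by a plain sum of two cost estimates) are assumptions for illustration; a real ordering would weigh many more signals, and the numbers below are made up.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PortOption:
    name: str
    deviation_cost: float  # hypothetical: extra fuel and time for the vessel to call here, USD
    travel_cost: float     # hypothetical: estimated flights for the joining crew, USD
    visa_ok: bool          # hypothetical: can the crew join here without extra visas?


def rank_ports(options: List[PortOption]) -> List[PortOption]:
    """Drop ports the crew cannot use, then order the rest by total estimated cost."""
    eligible = [p for p in options if p.visa_ok]
    return sorted(eligible, key=lambda p: p.deviation_cost + p.travel_cost)


def auto_select(options: List[PortOption], k: int = 3) -> List[PortOption]:
    """What clicking Next does at this stage: accept the top k ports from the ranking."""
    return rank_ports(options)[:k]


options = [
    PortOption("Singapore", 1000, 3000, True),
    PortOption("Port Klang", 2500, 2800, True),
    PortOption("Colombo", 500, 4500, False),
    PortOption("Fujairah", 800, 4000, True),
    PortOption("Rotterdam", 6000, 2000, True),
]
print([p.name for p in auto_select(options)])  # ['Singapore', 'Fujairah', 'Port Klang']
```

The user still sees the full ranked list and can swap any of the three; Next only saves the clicks when the ranking is already right.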

§Problem Four - Workflow

The final part is the workflow. How can we design something which supports 100% of use cases? What we found - or believed, given the lack of statistically significant data - is that 80% of cases could be simplified or automated entirely. Maybe another 10% could be covered with some more control over the process. The final 10% - especially the last 1% - needed absolute, fine-grained control over the choices being made, and this control was often critical. Looking for flights? Do you need to filter by airline? By layover time? By individual minimum layover? By overall flight length? By maximum layover?

The threat was that, for the 80% of cases, this complexity would get in the way of existing users and scare new users into leaving.

Our solution so far has been to slowly add complexity as users ask for it - and to be aggressive about taking it away, or hiding it. Each new control gives us something we could potentially automate, if the rules surrounding it are simple enough.
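Here is a Python sketch of how the flight controls listed above might sit behind a filter where every control starts unset, so the 80% case never touches them. The class and field names are hypothetical, and reading "individual minimum layover" as a per-airport minimum is my assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List, Optional


@dataclass
class Itinerary:
    airlines: FrozenSet[str]  # carriers operating any leg
    connections: List[str]    # airports where the traveller changes planes
    layovers_min: List[int]   # minutes on the ground at each connection, same order
    total_min: int            # first departure to last arrival, minutes


@dataclass
class FlightFilter:
    # Every control defaults to "unset", so the simple case never has to see it.
    airlines: Optional[FrozenSet[str]] = None
    min_layover_min: Optional[int] = None
    max_layover_min: Optional[int] = None
    per_airport_min_layover: Dict[str, int] = field(default_factory=dict)
    max_total_min: Optional[int] = None

    def accepts(self, it: Itinerary) -> bool:
        """True if the itinerary passes every control that has been set."""
        if self.airlines is not None and not it.airlines <= self.airlines:
            return False
        for airport, layover in zip(it.connections, it.layovers_min):
            if self.min_layover_min is not None and layover < self.min_layover_min:
                return False
            if self.max_layover_min is not None and layover > self.max_layover_min:
                return False
            if layover < self.per_airport_min_layover.get(airport, 0):
                return False
        if self.max_total_min is not None and it.total_min > self.max_total_min:
            return False
        return True


trip = Itinerary(frozenset({"SQ", "KL"}), ["SIN"], [745], 1770)
print(FlightFilter().accepts(trip))                                      # True
print(FlightFilter(airlines=frozenset({"SQ"})).accepts(trip))            # False
print(FlightFilter(per_airport_min_layover={"SIN": 120}).accepts(trip))  # True
```

Because an unset control is simply skipped, adding a new one costs nothing for users who never open it, and a control whose value can be inferred from simple rules is a candidate for automation.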

§Connecting the strings

When you put these solutions together, and have them work well, a one-click crew change becomes pretty simple. In our case, our first solution was to literally click (in software) the next button on our wizard until we reached the end. 1-click Crew Change!
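The "click Next until the end" approach can be sketched in a few lines of Python. The four stages come from the flow described earlier; the `Wizard` class and the per-stage defaults are hypothetical placeholders standing in for whatever each stage would pre-select.

```python
from enum import Enum
from typing import Any, Callable, Dict


class Stage(Enum):
    SELECT_CREW = 1
    SELECT_PORTS = 2
    SELECT_FLIGHTS = 3
    SEND_REQUESTS = 4


Plan = Dict[Stage, Any]
# A stage's default looks at the choices made so far and proposes its own.
Default = Callable[[Plan], Any]


class Wizard:
    def __init__(self, defaults: Dict[Stage, Default]) -> None:
        self.defaults = defaults
        self.stages = list(Stage)  # Enum iteration follows definition order
        self.index = 0
        self.plan: Plan = {}

    @property
    def done(self) -> bool:
        return self.index >= len(self.stages)

    def next(self) -> None:
        """The Next button: accept whatever the current stage proposes, then advance."""
        stage = self.stages[self.index]
        self.plan[stage] = self.defaults[stage](self.plan)
        self.index += 1


def one_click_crew_change(wizard: Wizard) -> Plan:
    """Press Next until the wizard runs out of stages."""
    while not wizard.done:
        wizard.next()
    return wizard.plan


defaults: Dict[Stage, Default] = {
    Stage.SELECT_CREW: lambda plan: ["off-signer 1", "on-signer 2"],
    Stage.SELECT_PORTS: lambda plan: ["Singapore"],
    Stage.SELECT_FLIGHTS: lambda plan: [f"flight to {plan[Stage.SELECT_PORTS][0]}"],
    Stage.SEND_REQUESTS: lambda plan: f"requests sent for {len(plan[Stage.SELECT_CREW])} crew",
}
plan = one_click_crew_change(Wizard(defaults))
print(plan[Stage.SEND_REQUESTS])  # requests sent for 2 crew
```

The point of the design is that the automation adds no new path through the product: it drives the same stages a person would, so anything it produces can be opened and edited stage by stage.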

It's gotten more complex since then, but the basics are still the same. If the interface breaks down complexity, if your parameters and controls are self-tuning, if you have a data-aware system that understands input, and you connect to and understand the reasons people do what they do, automation becomes as simple as clicking Next. Anyone could do it.

Now for what we learned in the month or two it's been in production:

§What is a corrective workflow?

We run a lot - I mean a lot - of crew change assessments as a company. Multiple reports for QA, every time we test a related feature, demos - we have been one of the biggest consumers of our own product.

So it surprised me when, almost overnight, the 1-click crew change became the default way we generated reports. Instead of going through the stages of selecting crew, finding ports, picking flights, and adjusting filters, the workflow became:

Click 1-click Crew Change -> Click away to YouTube or another distraction -> Come back in two minutes -> Fix whatever isn't to your liking in the report.

Turns out humans - or at least, us Greylings - prefer correcting machines to working alongside them. This is what I've come to call the corrective workflow - where some process of automation, be that ChatGPT (see, I did bring it back), or another kind of AI-based generative tool, takes the first step of doing what it thinks you want. Only after this point does the UI kick in, and it's aimed at letting you fix what was wrong and re-instruct the system for the future.

This is a rather powerful workflow because it gets around a problem with AI that may take years to solve: its output is unlikely to be perfectly accurate for a long time. More precisely, it is unlikely to be acceptably accurate for a long time, simply because we hold machines to a much higher standard of what counts as acceptably accurate. Ask self-driving cars.

The alternative is what I'll call the constructive workflow, where the user starts from scratch and the automation offers help or takes over at certain points.

Here are two examples to illustrate the difference.

A constructive AI-based writer would let the user start on a blank page, and offer suggestions to complete their sentence, or correct a word - or write entire paragraphs as they start them.

A corrective AI-based writer might write the entire email, highlight the areas it was most unsure about (or felt were most deserving of the sender's attention), and offer the opportunity to correct them, or provide alternatives. Each decision by the user can be used to improve performance in the future.
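The highlighting step of such a writer could look like this Python sketch. It assumes the generator already attaches a confidence score between 0 and 1 to each segment of the draft; how those scores are produced is outside the sketch, and `Segment`, `flag_for_review` and the thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    text: str
    confidence: float  # assumed to come from the generator, 0.0 to 1.0


def flag_for_review(segments: List[Segment], threshold: float = 0.6, max_flags: int = 3) -> List[int]:
    """Indices of segments below the threshold, least confident first, capped at max_flags."""
    shaky = [i for i, s in enumerate(segments) if s.confidence < threshold]
    shaky.sort(key=lambda i: segments[i].confidence)
    return shaky[:max_flags]


def render(segments: List[Segment], flagged: List[int]) -> str:
    """Join the draft back together, wrapping flagged segments in [[...]] for the reviewer."""
    marks = set(flagged)
    return " ".join(f"[[{s.text}]]" if i in marks else s.text for i, s in enumerate(segments))


draft = [
    Segment("Hi Maria,", 0.95),
    Segment("the crew change is set for Singapore on 12 March.", 0.55),
    Segment("Flights are booked via Doha.", 0.40),
    Segment("Thanks,", 0.99),
]
print(flag_for_review(draft))  # [2, 1]
```

Capping the number of flags matters as much as the threshold: a draft with everything highlighted asks the user to review all of it, which is the constructive workflow again.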

A constructive crew-change planner lets the user start planning on their own, and highlights options and settings to indicate the system's preference for what the ideal answers might be. Users can choose the recommended option, or go in a different direction.

A corrective crew-change planner emails users a crew-change plan as soon as it can, with a link to an interface where the plan can be critiqued and amended.
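A deliberately naive Python sketch of the corrective loop behind such a planner: draft a plan, apply the user's amendments, count them, and promote an amendment to the new default once it has been made `promote_after` times. The class, field names and promotion rule are all hypothetical; how best to fold corrections back into the automation is still an open question for us.

```python
from collections import Counter, defaultdict
from typing import DefaultDict, Dict


class CorrectiveDrafter:
    """Draft first, let the user fix the draft, and remember the fixes."""

    def __init__(self, defaults: Dict[str, str], promote_after: int = 2) -> None:
        self.defaults = dict(defaults)
        self.promote_after = promote_after
        # field name -> how often users replaced the draft value with each alternative
        self.corrections: DefaultDict[str, Counter] = defaultdict(Counter)

    def draft(self) -> Dict[str, str]:
        """Propose a plan: the most common correction if it recurs often enough, else the default."""
        plan = {}
        for name, default in self.defaults.items():
            counts = self.corrections[name]
            if counts:
                value, n = counts.most_common(1)[0]
                if n >= self.promote_after:
                    plan[name] = value
                    continue
            plan[name] = default
        return plan

    def correct(self, plan: Dict[str, str], fixes: Dict[str, str]) -> Dict[str, str]:
        """Apply the user's fixes and record every one that actually changed the draft."""
        for name, value in fixes.items():
            if plan.get(name) != value:
                self.corrections[name][value] += 1
        return {**plan, **fixes}


drafter = CorrectiveDrafter({"port": "Singapore", "airline": "any"})
final = drafter.correct(drafter.draft(), {"airline": "SQ"})
print(drafter.draft()["airline"])  # any (one correction is not enough yet)
drafter.correct(drafter.draft(), {"airline": "SQ"})
print(drafter.draft()["airline"])  # SQ
```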

§What this means for the future

This is a workflow we will be experimenting with more in 2023. There are still some open problems, like how we incorporate user corrections into our automation, and how to make the UI for this feel smooth and intentional, that we intend to solve in the coming months.

As with a lot of things, I believe the complexity lies in the presentation to the user, and the implied workflow in the piece of software. We're still working on figuring it out.
