Sometimes things are said in a relationship that you know will stick for a long time. My girlfriend said to me once that she needed me to communicate who I was, instead of who I wanted to be. She was right - when we had our differences, I would unknowingly argue from the position of who I wanted to be, rather than the person I knew I was in the present.

I've come to call this the aspirational self. We have many names for it - 'core personality', 'character' or 'values'. It's the stuff New Year's resolutions are made of. Our internal models of ourselves often correspond to who we want to be, and these models are often quite far from who we are day to day.

Too much distance and it becomes delusional and disingenuous, but a healthy distance - much like a healthy amount of debt for an economy - can be a good thing, even a recommended one. The aspirational self is often what pulls us forward, by reminding us of what needs work and improvement. The difference between who we are and the people we know we should be is what some of us call a conscience.

If we always acted in accord with our conscience, we wouldn't really need one - we could ascend to the heavens as a single unified being of pure light. Before that happens, we need ways to remind ourselves of who we want to be, and we've evolved many of those over thousands of years. Your mother, for example. I think most of us know the right thing to do in most situations, if we just imagined our mums standing over our shoulders.

Seriously, social pressure and expectations - even in something as small as being nice to a retail worker because you have company - can have a huge impact. Other humans remind us to work on being the people we want to be, and the people we think they want us to be. This distance - this rubber band of a gulf - keeps pushing us forward into new territory and outside our comfort zones, for good or bad.

Other people also put safeties on us. I can't order twenty drinks at most bars without someone asking me if I should stop, or taking my keys. The point I'm making is not that social safeties or peer pressure are a good thing - indeed they can be quite insidious, enforcing conformity. The point I am making is that this is what we're used to, and disconnecting it completely can be terrifying at a societal level.

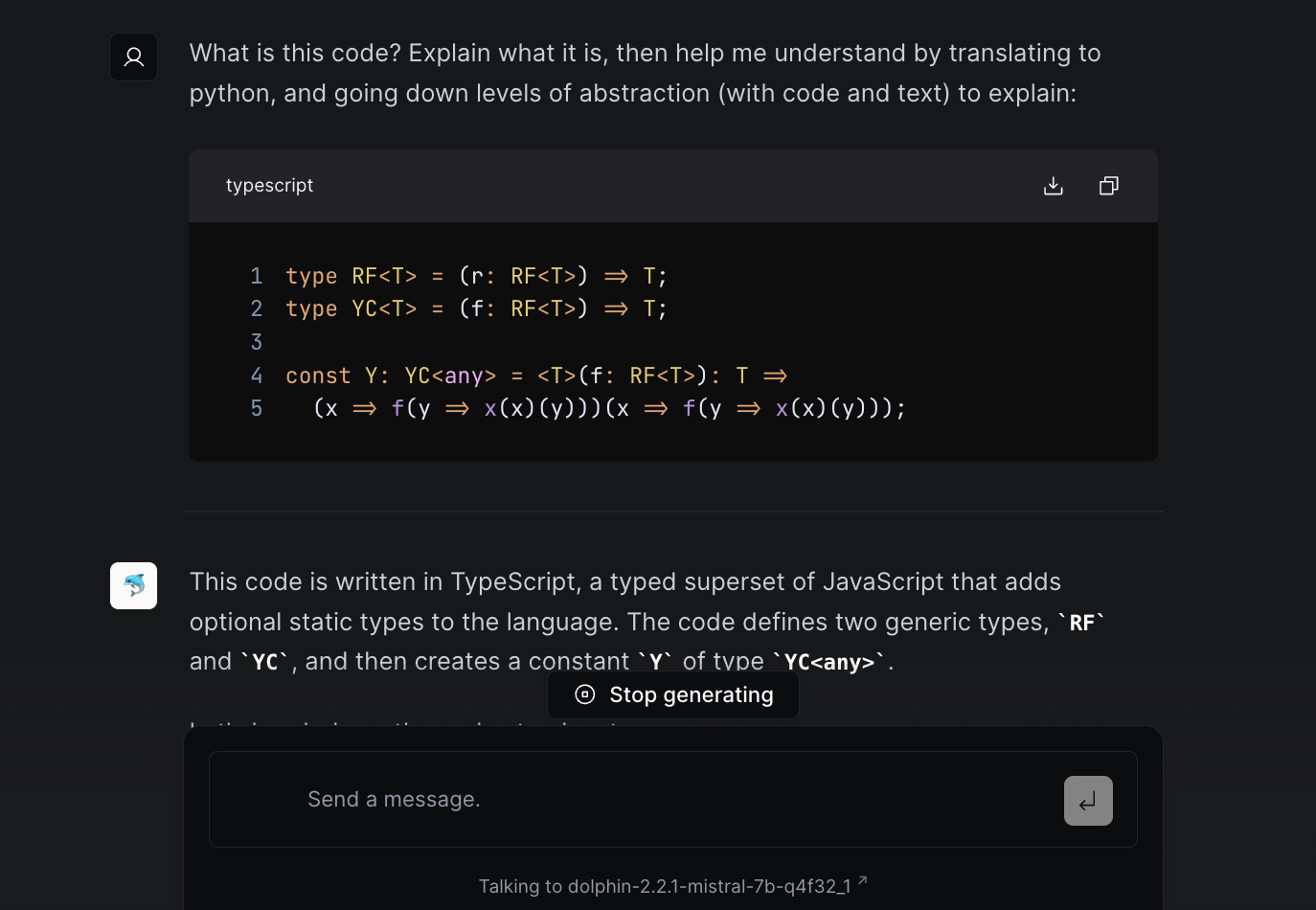

Indeed, the lack of this very distance is what makes algorithmic services so bad for us. With painful, systematic clarity, they recognize us for who we are and amplify it back to us. No room is left for the aspirational self, because the only thing measurable in tight feedback loops is who we are in each millisecond. These systems then encourage us to be more of what we are, while reassuring us that the people around us are just like us - which they are. No consideration remains for who we want to be, as people or as a species.

On the other side, we force our artists and creators to make more of what we feel guilty consuming, and they face a hard choice: doing what they want to do, or making more money in far less time. By intermediating ourselves with systems that can neither recognize nor care about where we ought to go, we've made it impossible to advance.

Anyone who has felt embarrassed by their personally curated feeds has felt this effect. The terrible toll on our young people and the widespread psychological issues we see are likely just the beginning, and I fear the tide has already turned.

This is the problem. Doing something about it is a lot harder, especially because modern digital products are designed to work the same way capitalist products were always intended to: we vote with our wallets and our attention. While this may have worked to some degree at larger volumes, it stops working entirely once microtransactions of attention and money are involved. We are incapable of making consistently good decisions in split seconds, and any good solution will need to recognize that.

## Finding a way forward

So what can we do? The most common answers I hear involve digital detoxes, putting more care into what we use, and blaming fellow victims - creators and artists - for bending to the algorithms. While the first two no doubt help, they place responsibility and blame on the individual for a systemic problem. Heroin addicts can and do quit, but individual recovery shouldn't be our only answer to a crisis.

It's also a matter of responsibility at a societal level. As stewards of the collective consciousness we call the internet, we owe it to ourselves to see where things are going and to apply corrective pressure.

I'm still unsure as to what a good solution is, but I'll list some of my thoughts below.

The UX of our products needs to change. More friction between desire and decision can help us reevaluate who we are and what we do. I find myself somewhat more sober on slower connections and machines, as the extra split second between wanting something and tumbling down the rabbit hole of having it makes me think. This is a hard thing to advocate for (and certainly not something I can impose on myself), but enforcing some distance at the UI level, between deciding you want something and having it, can have a positive effect.

The problem here is that in a fast-moving market, you will always be outcompeted by the faster solution. As a user, I've given TikTok my attention more often than I've given it to books, even though I know what I should be doing.

The solution might lie with top-down enforcement. Indeed, my favorite or once-favorite corners of the internet - like Hacker News - began with intense personal curation, and carried that fading light into their algorithms. Once the ball starts rolling, we've shown ourselves quite capable of pushing each other, as a community, a long way before it slows.

But as dictatorial systems often go, they are only as good or bad as the B in BDFL (benevolent dictator for life).

On a personal level, time-delay systems, both automated and manual, have proved quite effective for me. Putting away the things I want to watch, read or do for a little while - even as short as 30 minutes - has a tremendous effect on whether I still want to do them afterwards. When the wait is over and I finally have a real choice, the things I decide against are the ones I recognize as definitely not belonging to my aspirational self.

As both a creator and consumer of digital products, this is something I think about quite a bit. Do we build our systems to be addictive, or useful?
